In the courtroom, the New York Times has taken a hard line against OpenAI. The newspaper sued the artificial intelligence startup alongside investor and partner Microsoft, alleging that OpenAI scraped articles without permission or compensation. The Times wants to hold OpenAI and Microsoft responsible for billions of dollars in damages.

At the same time, however, the Times is also embracing OpenAI’s generative AI technology.

The Times’s use of the technology came to light thanks to leaked code showing that it developed a tool that would use OpenAI to generate headlines for articles and “help apply The New York Times styleguide” — performing functions that, if applied in the newsroom, are normally undertaken by editors at the newspaper.

“The project you’re referring to was a very early experiment by our engineering team designed to understand generative A.I. and its potential use cases,” Times spokesperson Charlie Stadtlander told The Intercept. “In this case, the experiment was not taken beyond testing, and was not used by the newsroom. We continue to experiment with potential applications of A.I. for the benefit of our journalists and audience.”

Media outlets are increasingly turning to artificial intelligence to handle various tasks. They use large language models, which are trained on large volumes of text and then generate language of their own. AI can be employed, for example, to sort through large data sets.

More public-facing uses of AI have sometimes resulted in embarrassment. Sports Illustrated deleted posts on its site after its readers exposed that some of its authors were AI-generated.

Some newsroom applications for AI are furtive, but in some cases, outlets publicize their AI work. Newsweek, for instance, announced an expansive, if nebulous, embrace of AI.

Like other outlets, the Times has not been shy about its use of AI. The website for the paper’s Research and Development team says, “Artificial Intelligence and journalism intersect in our reporting, editing and engagement with readers.” The Times site highlights 24 use cases of AI at the company; the style guide and headline project aren’t listed among them.

Because of its regurgitative way of operating, many view AI in newsrooms with skepticism. As the media industry sheds jobs — more than 20,000 positions were lost in 2023 — there are also worries that AI could take away even more roles for journalists. In 2017, the Times eliminated its copy edit desk, shifting some of the copy editors into other roles. The desk used to be responsible for enforcing the style guide, one of the tasks the publication tested with AI.

The Times code became public last month when an anonymous user on the 4chan bulletin board posted a link to a collection of thousands of the New York Times’s GitHub repositories. Repositories are essentially shared storage for code that developers work on collaboratively. A text file in the leak said the material constitutes a “more or less complete clone of all repositories of The New York Times on GitHub.”

The Times confirmed the authenticity of the leak in a statement to BleepingComputer, saying the code was “inadvertently made available” in January.

The leak contains more than 6,000 repositories totaling more than 3 million files. It consists of a wide-ranging collection of materials covering the engineering side of the Times, but little from the newsroom or business side of the organization appears to be included in the leak.

The Times has spent $1 million on its suit alleging copyright infringement against OpenAI and Microsoft. The Intercept is engaged in a separate lawsuit against OpenAI and Microsoft under the Digital Millennium Copyright Act.

“Do Not Improvise”

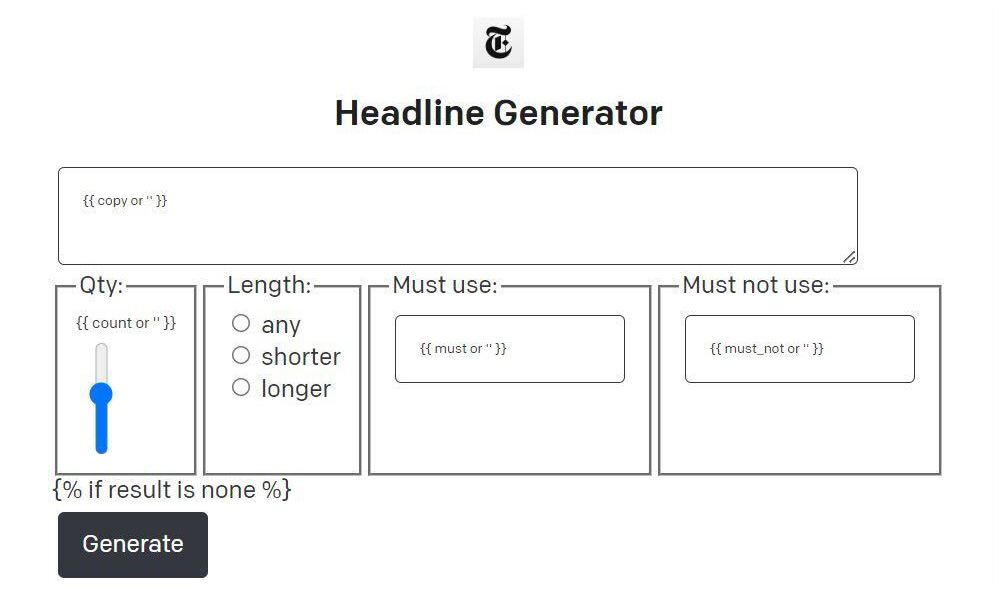

One of the New York Times’s AI projects, titled “OpenAI Styleguide,” is described in its accompanying documentation as a “prototype using OpenAI to help apply The New York Times styleguide.” The project also includes a headline generator.

The style guide checker utilizes an OpenAI large language model known as Davinci to correct all errors in an article’s headline, byline, dateline, or copy — the text of the article — that violate the Times’s style guide. The prompt tells the OpenAI bot, “Do not improvise. Do not use rules you may have learned elsewhere.”

A copy of the Times style guide resides in a separate repository, which is also available in the leak.

The headline generator component of the project tells OpenAI: “You are a headline writter [sic] for The New York Times. Use the following Article as context. Do not use prior knowledge.” The generator also allows the operator to impose several constraints, such as specifying which words must and must not be used.

The OpenAI Styleguide project is not the only instance in which the Times has experimented with using OpenAI for headline generation. Another repository contains a separate headline generation project using OpenAI’s chatbot, ChatGPT. The project was part of a Maker Week at the Times, in which staff work on “self-directed projects.” The Maker Week headline generator tells the bot: “Generate a numbered list of three sober, sophisticated, straight news headline based on the first three paragraphs of the following story.”

Another Maker Week project that uses OpenAI tools is “counterpoint,” described as an “application that generates counterpoints to opinion articles.” The project appears to be unfinished, only instructing the bot to extract keywords from an article.

Aside from OpenAI Styleguide, the Times GitHub leak contains source code for various applications. Some are research projects, such as “an effort to build a predictive model of print subscription attrition,” while others cover things like technical job interview test questions, staff training materials, authentication credentials, prototypes for unreleased games, and assorted personal information.

Correction: July 10, 2024

Due to an editing error, this story has been updated to remove a reference to mistakes in a Daily Mail article that online critics said were AI generated. The Daily Mail later attributed the mistakes to “regrettable human error.”