The Israeli military has reportedly implemented a facial recognition dragnet across the Gaza Strip, scanning ordinary Palestinians as they move throughout the ravaged territory, attempting to flee the ongoing bombardment and seeking sustenance for their families.

The program relies on two different facial recognition tools, according to the New York Times: one made by the Israeli contractor Corsight, and the other built into the popular consumer image organization platform offered through Google Photos. An anonymous Israeli official told the Times that Google Photos worked better than any of the alternative facial recognition tech, helping the Israelis make a “hit list” of alleged Hamas fighters who participated in the October 7 attack.

The mass surveillance of Palestinian faces resulting from Israel’s efforts to identify Hamas members has caught up thousands of Gaza residents since the October 7 attack. Many of those arrested or imprisoned, often with little or no evidence, later said they had been brutally interrogated or tortured. In its facial recognition story, the Times pointed to Palestinian poet Mosab Abu Toha, whose arrest and beating at the hands of the Israeli military began with its use of facial recognition. Abu Toha, later released without being charged with any crime, told the paper that Israeli soldiers told him his facial recognition-enabled arrest had been a “mistake.”

Putting aside questions of accuracy (facial recognition systems are notoriously less accurate on nonwhite faces), the use of Google Photos’s machine learning-powered analysis features to place civilians under military scrutiny, or worse, is at odds with the company’s clearly stated rules. Under the header “Dangerous and Illegal Activities,” Google warns that Google Photos cannot be used “to promote activities, goods, services, or information that cause serious and immediate harm to people.”

Asked how a prohibition against using Google Photos to harm people was compatible with the Israeli military’s use of Google Photos to create a “hit list,” company spokesperson Joshua Cruz declined to answer, stating only that “Google Photos is a free product which is widely available to the public that helps you organize photos by grouping similar faces, so you can label people to easily find old photos. It does not provide identities for unknown people in photographs.” (Cruz did not respond to repeated subsequent attempts to clarify Google’s position.)

It’s unclear how such prohibitions — or the company’s long-standing public commitments to human rights — are being applied to Israel’s military.

“It depends how Google interprets ‘serious and immediate harm’ and ‘illegal activity,’ but facial recognition surveillance of this type undermines rights enshrined in international human rights law — privacy, non-discrimination, expression, assembly rights, and more,” said Anna Bacciarelli, the associate tech director at Human Rights Watch. “Given the context in which this technology is being used by Israeli forces, amid widespread, ongoing, and systematic denial of the human rights of people in Gaza, I would hope that Google would take appropriate action.”

Doing Good or Doing Google?

In addition to its terms of service ban against using Google Photos to cause harm to people, the company has for many years claimed to embrace various global human rights standards.

“Since Google’s founding, we’ve believed in harnessing the power of technology to advance human rights,” wrote Alexandria Walden, the company’s global head of human rights, in a 2022 blog post. “That’s why our products, business operations, and decision-making around emerging technologies are all informed by our Human Rights Program and deep commitment to increase access to information and create new opportunities for people around the world.”

This deep commitment includes, according to the company, upholding the Universal Declaration of Human Rights, which forbids torture, and the U.N. Guiding Principles on Business and Human Rights, which note that conflicts over territory produce some of the worst rights abuses.

The Israeli military’s use of a free, publicly available Google product like Photos raises questions about these corporate human rights commitments, and the extent to which the company is willing to actually act upon them. Google says that it endorses and subscribes to the U.N. Guiding Principles on Business and Human Rights, a framework that calls on corporations “to prevent or mitigate adverse human rights impacts that are directly linked to their operations, products or services by their business relationships, even if they have not contributed to those impacts.”

Walden also said Google supports the Conflict-Sensitive Human Rights Due Diligence for ICT Companies, a voluntary framework that helps tech companies avoid the misuse of their products and services in war zones. Among the document’s many recommendations is that companies like Google consider “Use of products and services for government surveillance in violation of international human rights law norms causing immediate privacy and bodily security impacts (i.e., to locate, arrest, and imprison someone).” (Neither JustPeace Labs nor Business for Social Responsibility, which co-authored the due-diligence framework, replied to a request for comment.)

“Google and Corsight both have a responsibility to ensure that their products and services do not cause or contribute to human rights abuses,” said Bacciarelli. “I’d expect Google to take immediate action to end the use of Google Photos in this system, based on this news.”

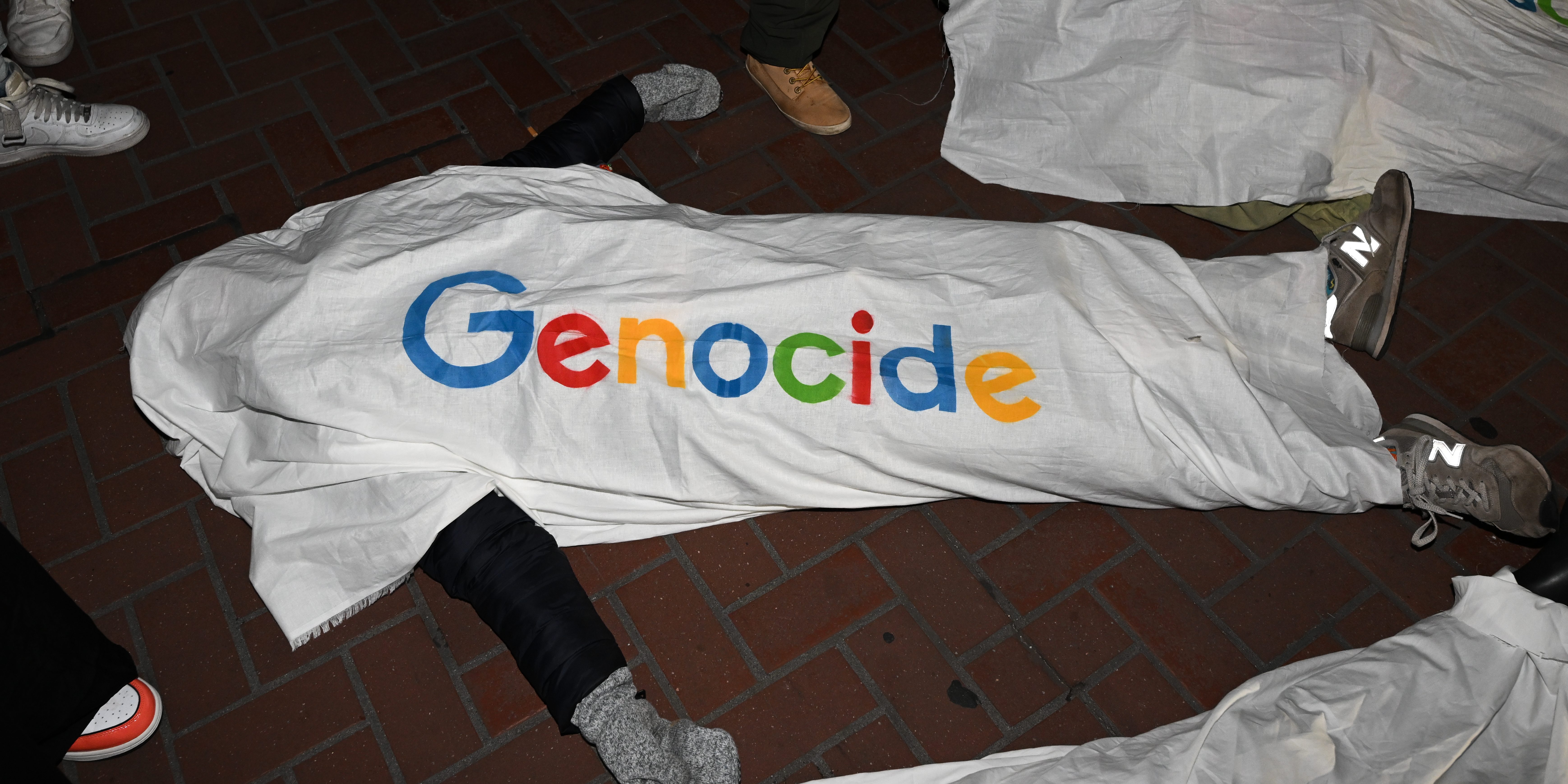

Google employees taking part in the No Tech for Apartheid campaign, a worker-led protest movement against Project Nimbus, called on their employer to prevent the Israeli military from using Photos’s facial recognition to prosecute the war in Gaza.

“That the Israeli military is even weaponizing consumer technology like Google Photos, using the included facial recognition to identify Palestinians as part of their surveillance apparatus, indicates that the Israeli military will use any technology made available to them — unless Google takes steps to ensure their products don’t contribute to ethnic cleansing, occupation, and genocide,” the group said in a statement shared with The Intercept. “As Google workers, we demand that the company drop Project Nimbus immediately, and cease all activity that supports the Israeli government and military’s genocidal agenda to decimate Gaza.”

Project Nimbus

This would not be the first time Google’s purported human rights principles have contradicted its business practices, even just in Israel. Since 2021, Google has sold the Israeli military advanced cloud computing and machine-learning tools through its controversial “Project Nimbus” contract.

Unlike Google Photos, a free consumer product available to anyone, Project Nimbus is a bespoke software project tailored to the needs of the Israeli state. Both Nimbus and Google Photos’s face-matching prowess, however, are products of the company’s immense machine-learning resources.

The sale of these sophisticated tools to a government so regularly accused of committing human rights abuses and war crimes stands in opposition to Google’s AI Principles. The guidelines forbid AI uses that are likely to cause “harm,” including any application “whose purpose contravenes widely accepted principles of international law and human rights.”

Google has previously suggested its “principles” are in fact far narrower than they appear, applying only to “custom AI work” and not the general use of its products by third parties. “It means that our technology can be used fairly broadly by the military,” a company spokesperson told Defense One in 2022.

How, or if, Google ever turns its executive-blogged assurances into real-world consequences remains unclear. Ariel Koren, a former Google employee who said she was forced out of her job in 2022 after protesting Project Nimbus, placed Google’s silence on the Photos issue in a broader pattern of avoiding responsibility for how its technology is used.

“It is an understatement to say that aiding and abetting a genocide constitutes a violation of Google’s AI principles and terms of service,” Koren, now an organizer with No Tech for Apartheid, told The Intercept. “Even in the absence of public comment, Google’s actions have made it clear that the company’s public AI ethics principles hold no bearing or weight in Google Cloud’s business decisions, and that even complicity in genocide is not a barrier to the company’s ruthless pursuit of profit at any cost.”